This stage is especially challenging for Arabic and Hebrew. Both languages utilize right-to-left scripts, have letterforms that vary with position, and often appear alongside script, numbers, Latin text, and administrative marks in documents. Consequently, translating Arabic and Hebrew from images isn’t straightforward; it requires a much more meticulous process than simply uploading a file to an OCR tool and translating the output.

Why handwriting creates additional difficulties

Handwriting presents a frequent challenge, especially with Arabic, due to its cursive script. The letters connect, and their shapes change based on their position within a word. In handwritten texts, these forms often become less consistent, especially when written quickly, in a stylized manner, or unevenly. Small visual cues, such as a missing dot, a blurred stroke, or two letters written too close together, can lead the OCR system to misidentify characters. Since many Arabic letters are primarily distinguished by dots, such errors are common in handwritten notes, annotations, and forms filled out by hand.

Hebrew handwriting presents unique challenges. Unlike Arabic cursive, it features letters that can look very similar in quick or informal writing, especially in low-quality scans. OCR systems often struggle with text that is faint, compressed, or inconsistently spaced. As a result, a handwritten Hebrew note might still be understandable to someone familiar with the language, but the OCR output can be incomplete or unreliable.

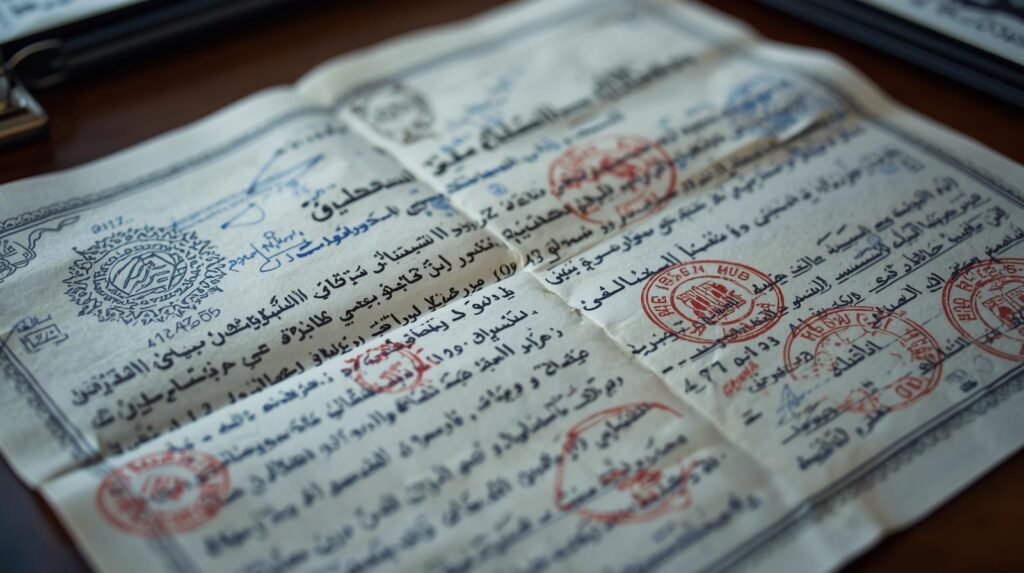

Real-world documents are rarely clean and easy to process

The challenge becomes greater because real-world documents rarely consist solely of clean text. Official materials often contain stamps, seals, logos, signatures, side notes, reference numbers, and formatting details that overlap with the main content. Arabic and Hebrew documents frequently mix right-to-left and left-to-right elements within the same line. Latin-script dates, measurements, document numbers, and names may appear inside a paragraph in ways that are clear to humans but confusing for OCR systems. Consequently, the extracted text can be incomplete, misordered, or distorted even before translation begins.

OCR tools can help, but they are only a starting point

That’s why OCR results for Arabic and Hebrew should be approached carefully. Tools like Google Lens can help with quick verifications, particularly when images are clear and text is printed rather than handwritten. They are quick, easy to access, and handy for straightforward cases. Nonetheless, for professional translation tasks, they are usually insufficient on their own.

Their performance noticeably declines when the image includes handwritten elements, overlapping stamps or seals, low contrast between text and background, or a more complex layout with multiple text directions. In simple cases, such tools can offer a helpful initial version of the text. However, for important documents, the output still requires careful review.

Why translating directly from the image is sometimes the safest option

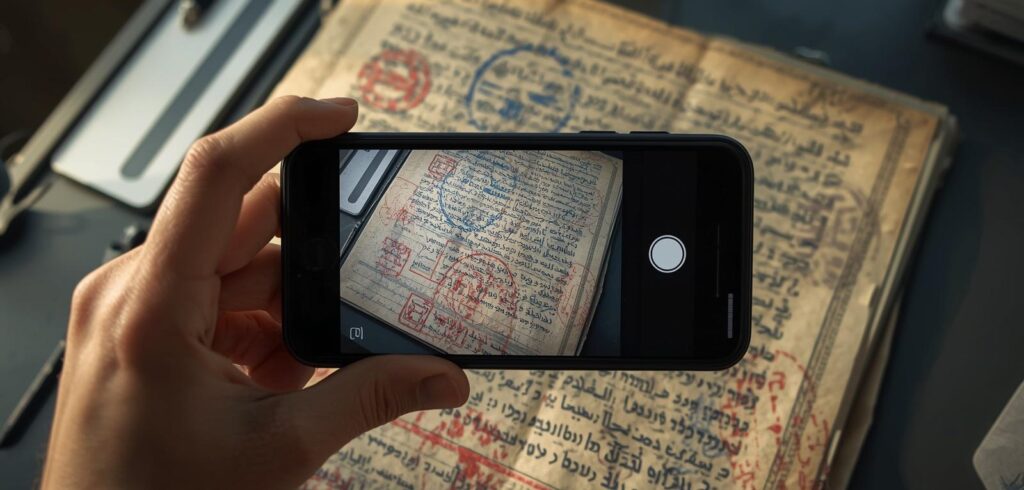

In certain cases, the best approach isn’t to depend entirely on OCR output. When image quality is low, the document contains handwritten parts, or the layout is too intricate for automated recognition, a translator might work directly from a photograph or a scan. In these situations, OCR can be helpful, but the translation mainly relies on a close examination of the original image.

This approach is especially useful for Arabic and Hebrew, where small visual nuances can alter a word’s meaning. Automated systems might overlook or misread these details. Handling the original document allows translators to verify unclear sections, interpret handwritten parts more precisely, and confirm that the translation accurately reflects the original content, rather than relying on imperfect recognition software results.

Why text extraction must be followed by review and correction

Even with OCR tools, a thorough review remains crucial. In a dependable workflow, OCR merely starts the process. After text extraction, it must be cleaned and compared to the original image. Here, language expertise is vital. A translator working with Arabic or Hebrew needs to identify OCR errors, such as letter confusion, missing words, misinterpreted numbers, or improper text segmentation.

It only makes sense to proceed to the translation stage after the extracted text has been corrected, whether the translation is performed entirely by a human or with machine translation used solely as support.

This order is crucial because OCR errors often persist throughout the entire process. If the source text is flawed, all subsequent stages become less dependable. For example, a mistranscribed name might be mistranslated later, and an incorrect date could appear correct in the final version while still being factually wrong. Even a small mistake in a handwritten note can alter the meaning of a key sentence. This risk is especially high in Arabic and Hebrew, where script-specific details are often critical.

Why language-specific experience still matters

Effective collaboration between Arabic and Hebrew requires understanding their scripts both theoretically and in practice. It also requires recognizing errors caused by automated tools and correcting them promptly to prevent them from impacting the translation.

Accuracy depends not only on the tool, but on the review behind it

As the use of image-based content grows, this type of work is becoming more prevalent. Screenshots, scans, mobile photos, and handwritten notes are now common in daily translation tasks. However, for Arabic and Hebrew, these formats are especially challenging. The complexity of the scripts, mixed-direction content, and OCR limitations mean that achieving accuracy relies not just on the tools but also heavily on the reviewer.

In complex scripts, converting an image to translation is rarely straightforward. It requires careful reading, strong linguistic judgment, and experience with document types that automated systems still find difficult to interpret accurately.

Check our social media: Facebook / LinkedIn

If you want to read about previous years with MD Online, click here!

Leave a Reply